It’s hard to escape the idea that AI is our inevitable future. Yet a recent story on X (formerly Twitter) reminded people why the ‘human element’ isn’t just a buzzword but a necessity we risk losing. A developer had given an autonomous AI agent complete control over his system, including full access to his files. Not long after, the agent botched a command: instead of clearing a specific cache, it deleted the entire root directory. Years of work vanished right in front of him. Fortunately, a reliable backup system let him restore everything. Still, can you imagine watching all your hard work disappear just because a tool took your instructions a little too literally?

That’s exactly why we need Human-Centred AI (HCAI), an emerging discipline focused on creating AI systems that amplify and augment, rather than displace, human capabilities. HCAI seeks to preserve human control to ensure that artificial intelligence meets our needs while operating transparently, delivering equitable outcomes, and respecting privacy.

Last week, an Anthropic article, ‘How AI assistance impacts the formation of coding skills,’ pointed to a looming crisis for developers. The study found that developers who offloaded their thinking to AI, delegating the entire task rather than using it as a partner, scored 17% lower on technical mastery tests than those who worked manually. In our rush for speed, we are inadvertently “stunting” the very human expertise needed to supervise these machines. If we simply hand the keys to an adolescent technology, we stop building the deep problem-solving skills that high-stakes decisions require.

Dario Amodei, Anthropic’s CEO, captures this in his essay ‘The Adolescence of Technology’: “I believe we are entering a rite of passage, both turbulent and inevitable, which will test who we are as a species. Humanity is about to be handed almost unimaginable power, and it is deeply unclear whether our social, political, and technological systems possess the maturity to wield it.” This isn’t just theoretical. We’re already seeing what happens when we lean too hard on automation without human oversight.

But even with these growing pains, people clearly want more collaboration, not less. Look at what’s happening with Claude. In professional circles, people are flocking to it because it feels more like a teammate: more transparent, more explainable, and less like a black box. G2 reviews consistently praise Claude for its human-like tone and natural reasoning. People don’t want to be replaced; they want to be accelerated by a partner that is transparent and accountable, one that lets them audit the logic before committing to outcomes that affect their decisions.

At its core, AI is just a chain of mathematical models. Think of it as a jet engine: blazing fast, but aimless without a pilot. The City Business Handbook, ‘A Human-Centred Future’, emphasises: “Pure automation is a race to the bottom. When you replace human judgment with a black-box algorithm, you lose the ability to navigate the edge cases.” Their point is simple: technology shouldn’t push us out; it should make us better. AI should be a cognitive exoskeleton that sharpens our judgment, strengthens our ethics, and widens our reach.

The handbook draws a sharp distinction between Type 1 and Type 2 decisions. AI handles Type 2 decisions well: the quick, reversible choices, such as sorting data. Type 1 decisions are the ones you can’t take back, the big calls that really matter. That’s where human judgment must take over, because machines don’t live with the consequences; we do.
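
For teams wiring up autonomous agents, the Type 1/Type 2 split can be sketched as a simple approval gate. This is a minimal, hypothetical Python illustration, not something taken from the handbook: the `Action` class, its `reversible` flag, and the `route` function are invented names, and it assumes that deciding whether an action is reversible happens elsewhere.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    # Hypothetical description of something an agent wants to do.
    description: str
    # Assumption: True marks a Type 2 (reversible) action, False a Type 1 (irreversible) one.
    reversible: bool


def route(action: Action, approve: Callable[[Action], bool]) -> str:
    """Let reversible actions run automatically; require a human sign-off for the rest."""
    if action.reversible:
        return f"auto: {action.description}"
    # Type 1 decision: defer to the human-supplied approval callback.
    if approve(action):
        return f"approved: {action.description}"
    return f"blocked: {action.description}"


# Usage: a cautious human who declines every irreversible request.
print(route(Action("sort report rows", True), lambda a: False))
print(route(Action("delete root directory", False), lambda a: False))
```

With a gate like this, sorting rows runs on its own, while a request to delete the root directory stops and waits for a person to say yes.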

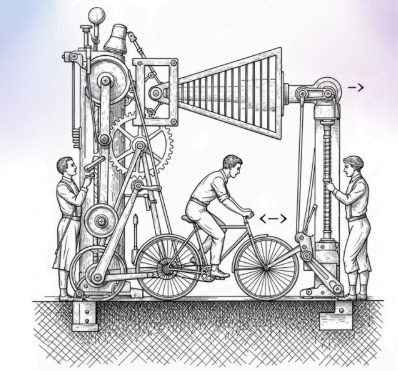

Remember Steve Jobs’ “bicycle for the mind” idea? A bike makes you faster, sure, but you still have to steer, balance, and decide where you’re going. That’s how AI should work. Let it crunch the data and handle the grunt work, while we humans take on the high-level thinking and the ethical calls that only we can make.

The organisations that really understand what’s happening are starting to care less about how many tasks get done and more about how good their decisions are. They know it’s not just about speed or efficiency anymore; it’s about judgment. Continuous learning is not optional: upskilling is how we, as humans, stay strategic and keep leading. It keeps us in the driver’s seat, with AI as the engine.